Last year, I contributed to the Toposophy podcast "Common Ground". It covers a wide range of topics in urbanism and urban planning, from culture to technology. The episode that I spoke on is dedicated to direct democracy and citizen science, exploring the links between forms of deep citizen engagement in the management … Continue reading Toposophy’s “common ground” podcast episode on citizen science

Tag: VGI

C*Sci 2023 and the new name of the (US) Citizen Science Association

At the end of May, the Citizen Science Association (CSA) celebrated its 10th anniversary at its conference, C*Sci 2023, held at Arizona State University (ASU) in Tempe, Arizona. The conference (22-26 May) provided the opportunity to meet face-to-face with colleagues in the area of citizen science, whom I haven't had a chance to talk … Continue reading C*Sci 2023 and the new name of the (US) Citizen Science Association

Nature Reviews Methods Primers paper on citizen science

At the end of September 2021, I received an email from Nature Reviews Methods Primers (NRMP) with an invitation to lead a paper on citizen science. NRMP is a journal that commissions methodological papers on different topics, so they can be used by early career and experienced researchers, to learn about a new methodology and … Continue reading Nature Reviews Methods Primers paper on citizen science

Extreme Citizen Science Analysis and Visualisation – final event

Today is the first day after the end of the European Research Council (ERC) funded project "Extreme Citizen Science: Analysis and Visualisation". For me, it's an important milestone. In July 2007, Jerome Lewis got in touch with me about the need to develop data collection and visualisation to protect and nurture forest communities. This effort evolved … Continue reading Extreme Citizen Science Analysis and Visualisation – final event

Contours of Citizen Science paper published in Royal Society Open Science

At the end of 2019, just before the pandemic, I was lucky to be hosted and supported by the Centre for Research and Interdisciplinarity in Paris (CRI-Paris.org) and, with colleagues from ECSA and the European project EU-Citizen.Science, carried out a survey to help identify the boundaries of citizen science, in terms of the … Continue reading Contours of Citizen Science paper published in Royal Society Open Science

Would you call this a citizen science activity?

This is an invitation to complete a survey that is available at https://www.surveymonkey.com/r/7XN5MQG. This survey will present you with stories about different forms of public participation in research. Different activities within this area of public participation in research are now called “citizen science” and we would like to hear what your opinion is about each activity. … Continue reading Would you call this a citizen science activity?

Published: Citizen science and the United Nations Sustainable Development Goals

Back in October 2018, I reported on the workshop at the International Institute for Applied Systems Analysis (IIASA) about non-traditional data approaches and the Sustainable Development Goals. The outcome of this workshop has now been published in Nature Sustainability. The writing process was coordinated by Dr Linda See of IIASA, with a distributed process that included … Continue reading Published: Citizen science and the United Nations Sustainable Development Goals

Call for Participation in Vespucci Training School on Digital Transformations in Citizen Science and Social Innovation – January 2019

Apply until 31 October at https://www.cs-eu.net/events/internal/vespucci-training-school-digital-transformations-citizen-science-and-social. The Role of Digital Technologies in Engaging Citizens (not only Citizen Scientists) in Social Innovation. With the widespread availability of cheap, ubiquitous and powerful tools like the internet, the World Wide Web, social media and smartphone apps, new ways of carrying out both citizen science and social innovation have become possible. Often … Continue reading Call for Participation in Vespucci Training School on Digital Transformations in Citizen Science and Social Innovation – January 2019

Non-traditional data approaches and the Sustainable Development Goals workshop

The workshop took place at IIASA, which is located in Laxenburg in Austria. The workshop was hosted by the earth observation and citizen science group at IIASA. The workshop focused on the interface between citizen science, earth observation, and traditional data collection methods in the context of monitoring and contributing to the Sustainable Development Goals … Continue reading Non-traditional data approaches and the Sustainable Development Goals workshop

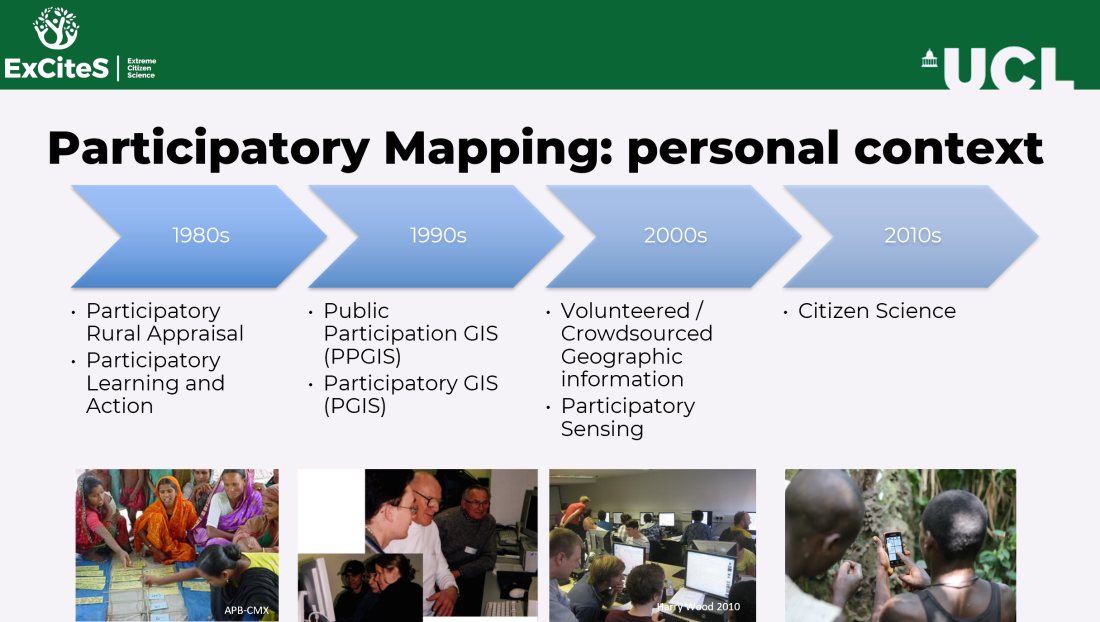

Papers from PPGIS 2017 meeting: state of the art and examples from Poland and the Czech Republic

About a year ago, the Adam Mickiewicz University in Poznań, Poland, hosted the PPGIS 2017 workshop (here are my notes from the first day and the second day). Today, four papers from the workshop were published in the journal Quaestiones Geographicae, which was established in 1974 as an annual journal of the Faculty of Geographical and Geological … Continue reading Papers from PPGIS 2017 meeting: state of the art and examples from Poland and the Czech Republic