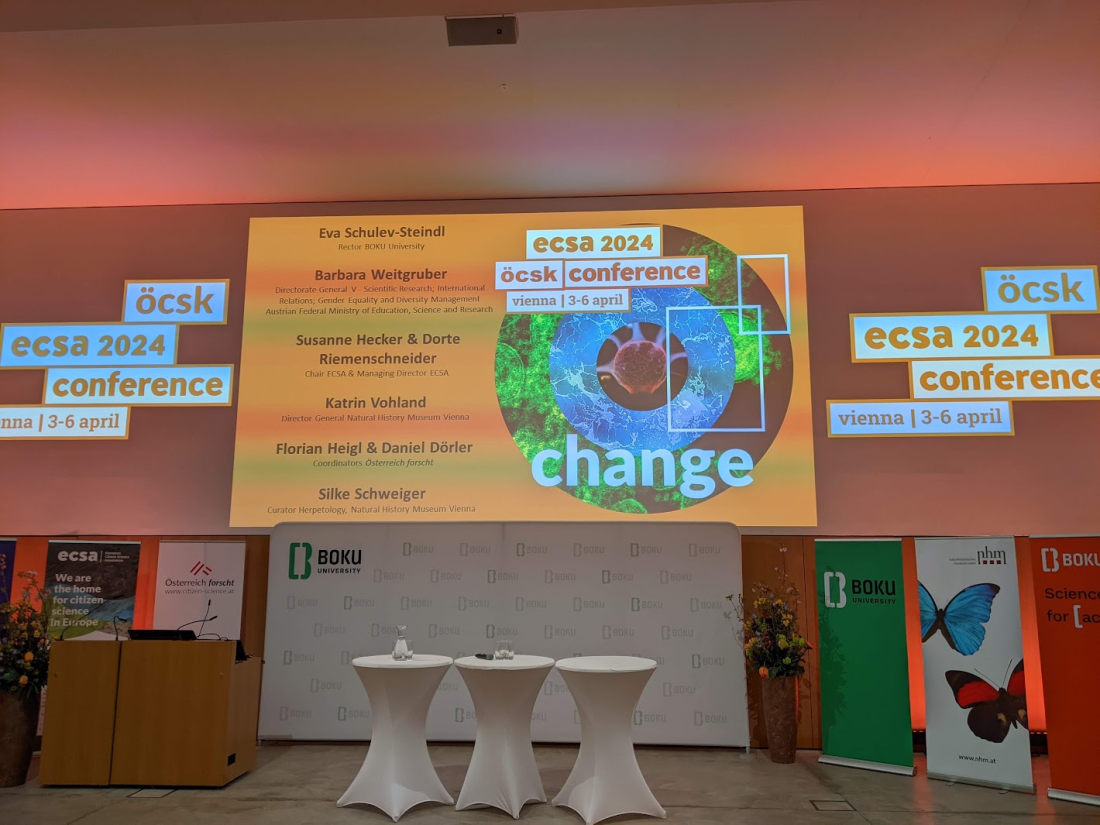

The European Citizen Science Association is celebrating 10 years, and the bi-annual conference is dedicated to change. The opening session took place at BOKU University and was opened by the rector, Eva Schulev-Steindl. She noted that citizen science is a powerful tool for democratising science, empowering individuals and communities to be involved in science. At BOKU there is … Continue reading Notes from ECSA 2024 conference – Day 1 opening session

Tag: Environmental information

Extreme Citizen Science Analysis and Visualisation – final event

Today is the first day after the end of the European Research Council (ERC) funded project "Extreme Citizen Science: Analysis and visualisation". For me, it's an important milestone. In July 2007, Jerome Lewis got in touch with me about the need to develop data collection and visualisation to protect and nurture forest communities. This effort evolved … Continue reading Extreme Citizen Science Analysis and Visualisation – final event

Notes from thematic session on the promotion of the principles of the Convention in international forums, 23 June, 2022

Information about the session, as stated at https://unece.org/sites/default/files/2022-06/WGP-26_Programme_PPIF_session.pdf: "The thematic session on the promotion of the principles of the Aarhus Convention in international forums will include panel presentations and round table discussion on rules of procedure and practice with regard to access to information and public participation in the international decision-making on legally binding … Continue reading Notes from thematic session on the promotion of the principles of the Convention in international forums, 23 June, 2022

Roundtable on environmental defenders – Aarhus convention ExMOP

Notes from the roundtable on environmental defenders. I have not tried to capture the exact words of the presenters. This is part of the Aarhus ExMOP from 24 June 2022. The chair of the session, Teresa Weber, noted that the appointment of the special rapporteur on environmental defenders, elected yesterday, is a way in which … Continue reading Roundtable on environmental defenders – Aarhus convention ExMOP

Thematic session on access to information (UNECE Aarhus convention – working group of parties)

These notes are from a session which is part of the working group meeting of the parties of the Aarhus Convention on 22 June 2022. It's only part of the meeting; the full details can be found on the UNECE site. The thematic session on access to information focused on … Continue reading Thematic session on access to information (UNECE Aarhus convention – working group of parties)

Engaging Citizen Science – Dick Kasperowski keynote: scientific and civic engagement in citizen science: time to talk about societal effects?

Dick's talk - scientific and civic engagement in citizen science: time to talk about societal effects? Talking from a Swedish perspective to explore if the issues are generic. There are some disturbing issues - paradoxes, inequalities, and gender issues. Democracy: Infrastructures, how trust plays out and how CS is used at "the limits of the … Continue reading Engaging Citizen Science – Dick Kasperowski keynote: scientific and civic engagement in citizen science: time to talk about societal effects?

Conference notes: Engaging Citizen Science – Aarhus. Keynote Heidi Ballard

The Engaging Citizen Science conference is organised by the Danish citizen science network and took place at Aarhus University (25-26 April). The conference started with a keynote by Heidi Ballard, UC Davis. Gitte Kragh starting the conference Challenges Heidi Ballard - engaging communities. Multiple crises: Covid-19, climate change, racial injustice, refugee crisis. Young people feel … Continue reading Conference notes: Engaging Citizen Science – Aarhus. Keynote Heidi Ballard

CSA law and policy group: the Aarhus Convention and Citizen Science

This is the recording from earlier this month of a meeting of the Citizen Science Association (CSA) law and policy working group, which was dedicated to the Aarhus Convention and its relevance to citizen science. The main speakers in the session were Lea Shanley, who co-chairs the group, Anna Berti Suman (check her … Continue reading CSA law and policy group: the Aarhus Convention and Citizen Science

Recording of a GEO6 Webinar – data and value knowledge creation

This is the recording of a webinar that was dedicated to chapters 3 and 25 of the Global Environment Outlook. It covers different sources of data, including citizen science and indigenous knowledge. Presented by • James Donovan, CEO, ADEC Innovations • Charles Mwangi, Deputy Country Coordinator for the GLOBE Program in Kenya • Jillian Campbell, … Continue reading Recording of a GEO6 Webinar – data and value knowledge creation

Citizen Science 2019: Citizen Science in Action: A Tale of Four Advocates Who Would Have Lost Without You

Jessica Culpepper (Public Justice), Larry Baldwin (Crystal Coast Waterkeeper), Matt Hepler (Appalachian Voices), Michael Krochta (Bark). Jessica - there can be a disconnect between the work on the ground and how it is used in advocacy. On how to use the information to make the world a better place, and hold polluters to account. First, Michael Krochta (Bark) from … Continue reading Citizen Science 2019: Citizen Science in Action: A Tale of Four Advocates Who Would Have Lost Without You